🔮 Ten things I’m thinking about AI

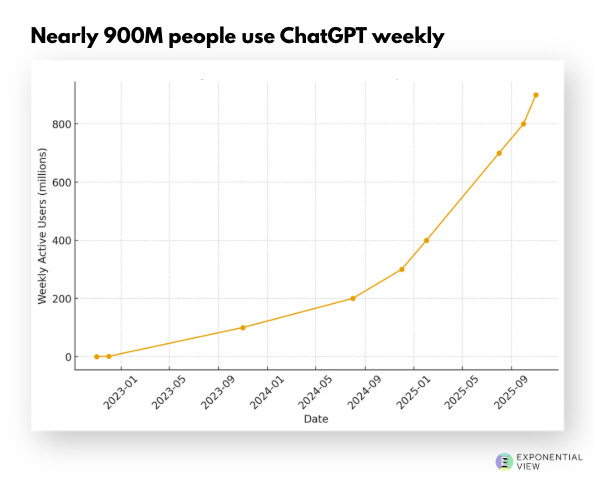

As we approach ChatGPT's 3rd birthday, I share my latest views.

On Sunday, 30th November, ChatGPT turns three. Wild three years.

ChatGPT’s launch triggered a dramatic race in research. Before it, the big US labs released a major model every six months. Since then, the pace has picked up to one every two weeks.