🔮 Exponential View #563: The Citrini craze; human cognition; the most aggressive AI regulation; OpenAI spikes; COBOL returns; bye‑bye tax filing++

You offer a clarifying framing to what has changed in just the past 25 years, and for grasping both the scale and depth of that transformation. — Danie H., a paying member

Hi all,

Welcome to the Sunday edition, in which we make sense of the week behind us.

Enjoy!

The future of human cognition

This past week, I convened the first AI Vistas dialogue, designed so that even the experts in the room learn by engaging with each other on one hard question. In our first dialogue, I explored “Are we in charge of our AI tools or are they in charge of us?” with Nicholas Thompson, Eric Topol, Rohit Krishnan and Nita Farahany. A few themes that stood out to me:

Autonomy is no longer simply “I chose this,” but “I chose this with a cognitive exoskeleton that is steerable, hackable, and designed by others.”

Everyone has a generative core that must be protected from offloading to AI. We should all understand where our best thinking happens and ring-fence it from automation.

As AI takes over the “visible” work, human institutions must focus on invisible work: building attention, intuition, social and embodied skills.

The billion-dollar overreaction

Citrini, a research shop, published a speculative Substack post about the logical extreme of where AI disruption might take us. Their 2028 scenario has agentic tools letting developers clone mid-market SaaS in weeks, collapsing software pricing power overnight. This alarms other firms, which cut white-collar headcount and double down on AI. The dynamic continues to eat away at the demographic responsible for 75% of discretionary consumer spending. By 2028, they suggest, unemployment reaches 10.2% and the S&P is down 38%.

It sounds like a catastrophe. Citrini’s piece had a 3.4-to-1 ratio of negative/crisis words to positive ones, with 198 subjective/emotional words. It was designed to elicit an emotional response. And it did.

I take issue with two of the essay’s assumptions – that there is no adaptation, and that everything happens everywhere all at once. As investor Gavin Baker observed, the scarcity of watts and wafers will significantly slow the scenario. He estimates we’d need 1,000x more compute than we have today for anything resembling Citrini’s scenario. AI firms already face a compute crunch (as we argued here). This may raise the cost of compute and make human labor much more cost-competitive. My hunch is that this, along with other factors such as data center protests, will slow the rate of diffusion.

Citrini, of course, is not all wrong. The first flywheel has kicked off – cheap, fast software is already undercutting mid-market SaaS. But their framing focuses on the wrong part of the story. This month, someone on our team replaced a $40/month SaaS product in a couple of hours by building something that actually worked the way they work. In this case, it’s not a job loss but a capability gain. We need to look at the gains and the pinch points of the transition. The collateral damage of railroads cannot be ignored, but neither can the fact that they didn’t just kill the canal companies; they also created an entirely new geography of economic possibility.

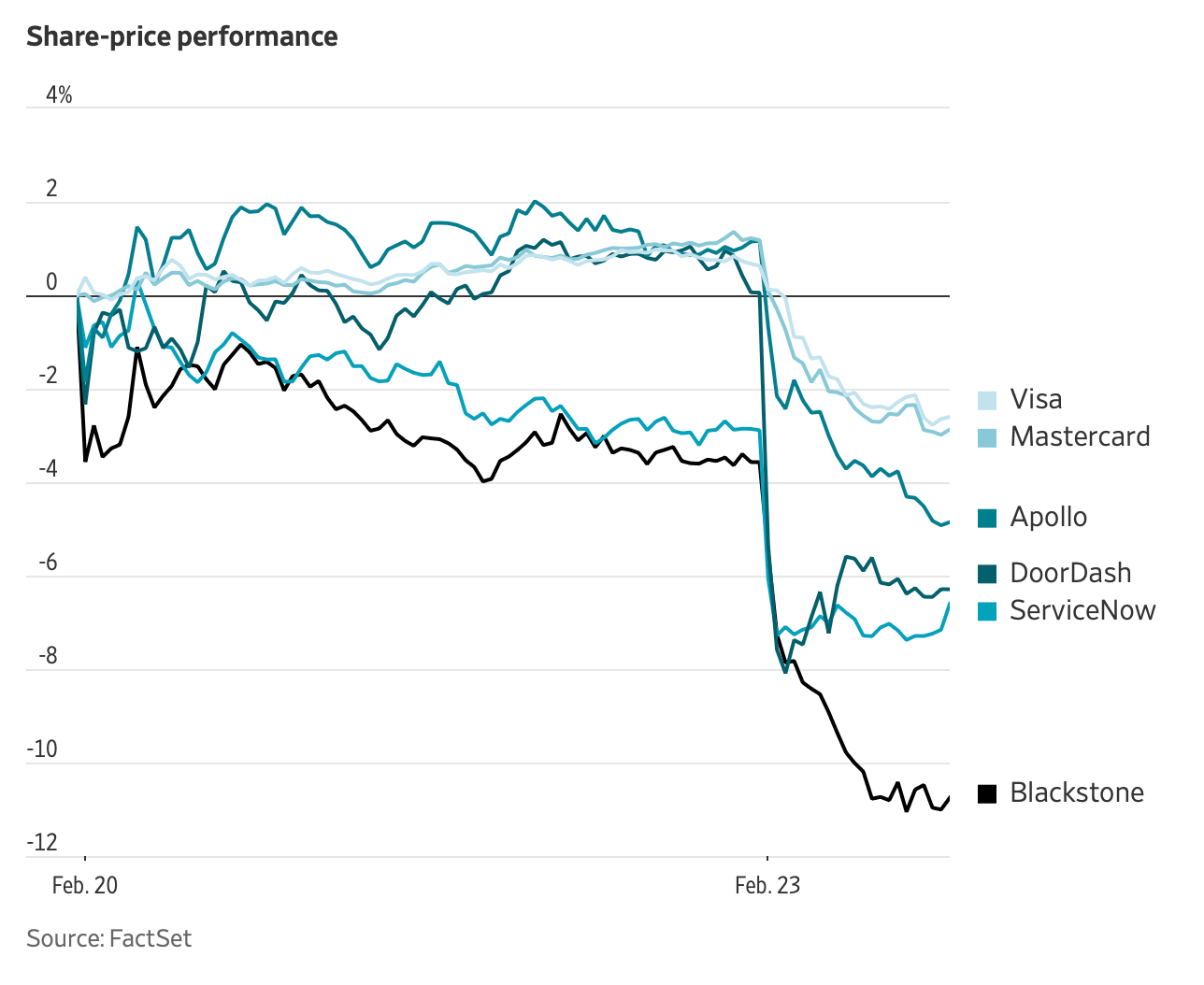

In creative destruction, markets always show what is destroyed first. And the markets are selling the canal phase of an AI transition that, like railroads, will destroy visible incumbents while building new economic possibilities. But that destruction will be slowed by the compute crunch, which buys time for adaptation that Citrini doesn’t consider.

See also:

Anthropic has released Claude for COBOL. COBOL is an archaic programming language that still powers 43% of banking, 95% of ATMs, and $3 trillion+ in daily transactions. The average COBOL programmer is 64 years old. IBM dropped 13%.

Jack Dorsey is cutting Block’s workforce nearly in half, betting that AI tools paired with smaller, flatter teams are the way forward.

The most aggressive AI regulator in the world

This week, the US government became, by a significant margin, the world’s most aggressive regulator of artificial intelligence. Poor Europe is losing even this ignominious title.

Defence Secretary Pete Hegseth designated Anthropic a national security supply chain risk, effectively barring federal contractors from using its technology in government work. Hours later, Trump directed every federal agency to follow suit. No Chinese AI company has received the same designation. It’s quite an astonishing sucker-punch.

The proximate cause was Anthropic’s refusal to lift all safety constraints on military use of Claude, specifically those around autonomous targeting and AI-assisted mass surveillance. These aren’t unreasonable positions; they reflect genuine technical concerns about where AI capability ends and unacceptable risk begins. But the punishment for holding them was disproportionate: a tool designed for compromised semiconductor supply chains and foreign hardware manufacturers was repurposed to punish an American AI lab.

The deeper problem is the one I keep returning to – the laws governing this space were written for a different technological era. FISA was last substantially updated in 2008. Defence contracting frameworks assume software is a passive, bounded tool. Neither has a vocabulary for AI systems that can synthesise fragmented surveillance data across datasets in minutes, or for the specific danger zone Anthropic identified: capable enough for defence use, not safe enough for autonomous lethal decisions. Raw executive power rushed into that gap.