📈 Data to start your week: The AI capacity trap

AI has never been cheaper to access. It has also never been harder to use without hitting a wall.

Cheaper AI was supposed to ease the compute crunch. Instead, it made it worse. The Jevons paradox, applied to intelligence, means that every time the price of a token falls, demand rises faster than supply can scale.

But the labs can’t clear the queue by raising prices, because their customers would defect to the next best alternative. So the compute crunch shows up where economists would least expect it: in rationing rather than in price. As we wrote yesterday, OpenAI is passing on opportunities, Anthropic is adjusting session limits for its Pro users, and open-source models are being shut down.

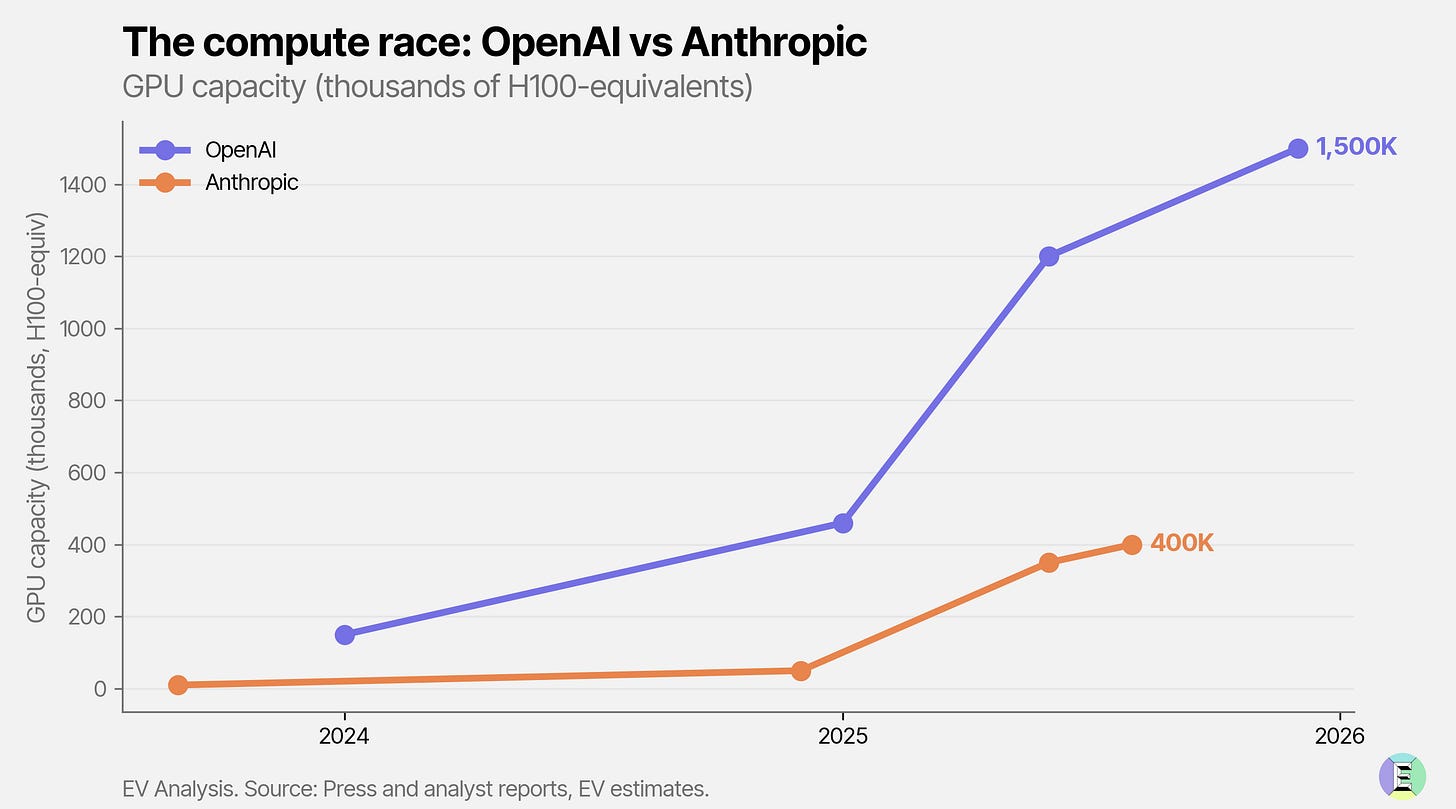

But first, let’s start with the demand. OpenAI’s APIs processed 6 billion tokens per minute in October 2025. By April this year, that had risen to 15 billion, a 2.5x increase in five months. Both OpenAI and Anthropic are in a race to maximise compute to meet demand.
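
To put that jump in perspective, here is a back-of-the-envelope sketch of the monthly growth rate it implies. It assumes growth was smooth and compounding over the five months, which the two data points alone cannot confirm; the function name is ours, for illustration only.

```python
def implied_monthly_growth(start, end, months):
    """Constant monthly growth factor that turns `start` into `end` over `months`.

    Assumes smooth compounding, which is a simplification: the source only
    gives the two endpoints, not the path between them.
    """
    return (end / start) ** (1 / months)


# Figures from the text: 6 billion tokens per minute rising to 15 billion.
start_tokens_per_min = 6e9
end_tokens_per_min = 15e9
months = 5

factor = implied_monthly_growth(start_tokens_per_min, end_tokens_per_min, months)

print(f"total multiple: {end_tokens_per_min / start_tokens_per_min:.1f}x")
print(f"implied monthly growth: {(factor - 1) * 100:.1f}%")
```

Under that assumption, the load grows by roughly a fifth every month, which gives a sense of how quickly new capacity would need to come online just to keep pace.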

That relentless load is why Google’s TPUs across all seven generations, some now seven and eight years old, are running at full utilization. Hardware that was expected to have depreciated years ago is still earning its keep.

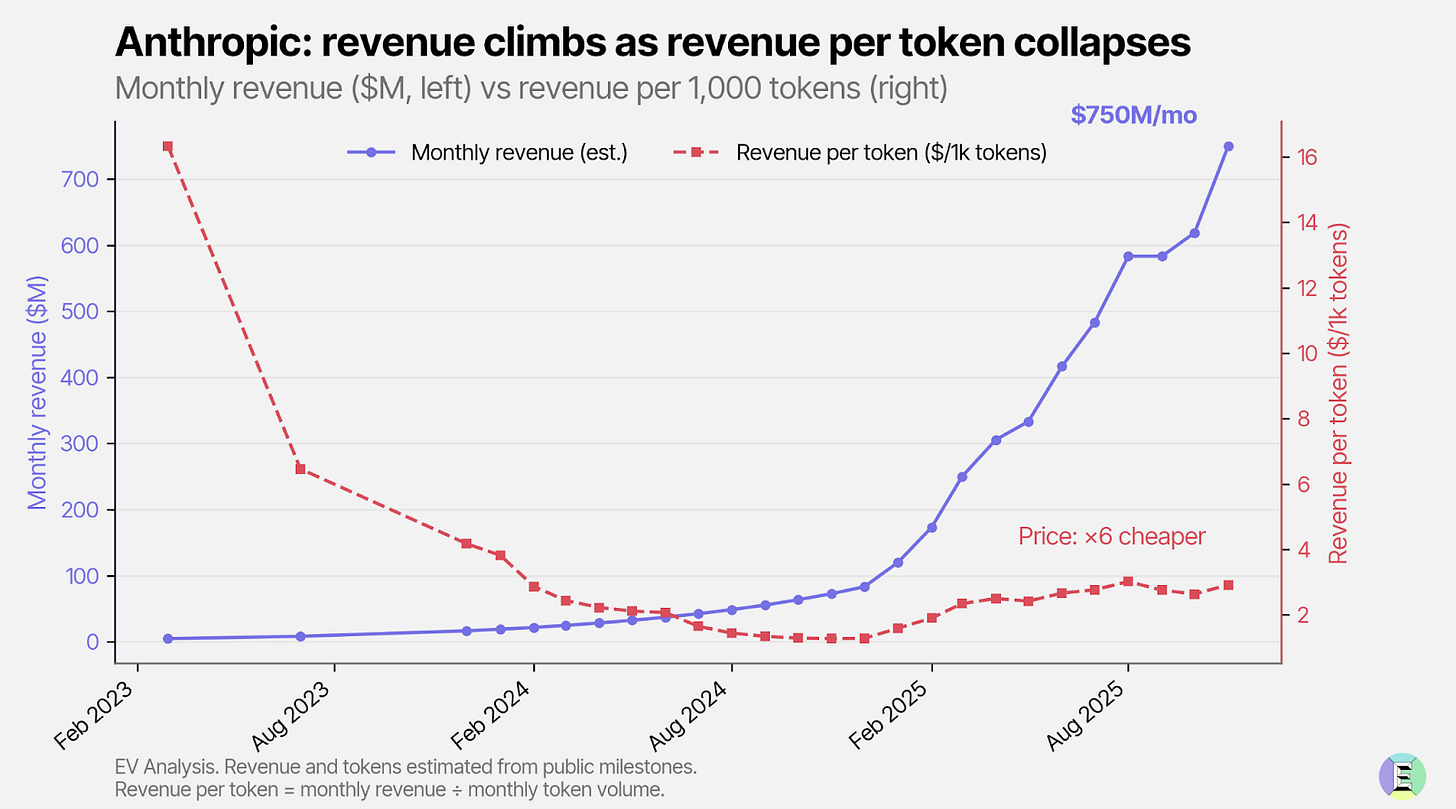

The pressure shows up in revenue. Anthropic’s total revenue is growing, but its price per token is falling, and falling faster than overall revenue is rising. Since revenue is price times volume, the business is becoming increasingly dependent on volume to sustain growth.
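
The arithmetic behind that dependence is simple: if revenue is price per token times tokens sold, any fall in price has to be more than offset by volume for revenue to rise. The sketch below uses made-up percentages purely for illustration; they are not Anthropic's actual figures, and the function name is hypothetical.

```python
def required_volume_multiple(revenue_change, price_change):
    """Volume multiple needed to produce `revenue_change` given `price_change`.

    Uses revenue = price * volume, so
    volume multiple = (1 + revenue_change) / (1 + price_change).
    Changes are fractions, e.g. -0.50 means a 50% fall.
    """
    return (1 + revenue_change) / (1 + price_change)


# Hypothetical inputs, NOT real company data:
# revenue up 30% while the price per token halves.
multiple = required_volume_multiple(revenue_change=0.30, price_change=-0.50)

print(f"tokens sold must grow {multiple:.1f}x")
```

In this illustrative case, token volume has to grow 2.6x just to deliver 30% more revenue, which is why falling prices tie growth so tightly to available compute.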

Users are feeling the squeeze, too. Across every major AI platform, usage allowances tightened last year: more tiers, stricter limits, and changes that often showed up without notice.