🔮 Why I changed my mind about Apple

AI’s most important hardware company?

I hold my opinions firmly, but when the facts change, so do I. Today I want to tell you why I changed my mind on Apple and AI.

Apple has been conspicuously slow to deliver on AI. Unlike its peers, it isn’t spending hundreds of billions on data centers. Capital expenditure has been reasonably flat. Siri, the feature we all love to turn off, hasn’t meaningfully improved in a decade. No one expects major breakthroughs from Apple in research AI or applied AI in the near future.

John Gruber, the most widely read Apple analyst, called a WWDC demo “a concept video.” Analyst Ben Thompson said Apple was nowhere near the cutting edge. I was in this group.

But what I missed was that every single day, as I was hammering away at ChatGPT or Claude, switching between models, I was doing it through an Apple device. The model I used changed constantly but the device did not. This only really hit me once OpenClaw arrived.

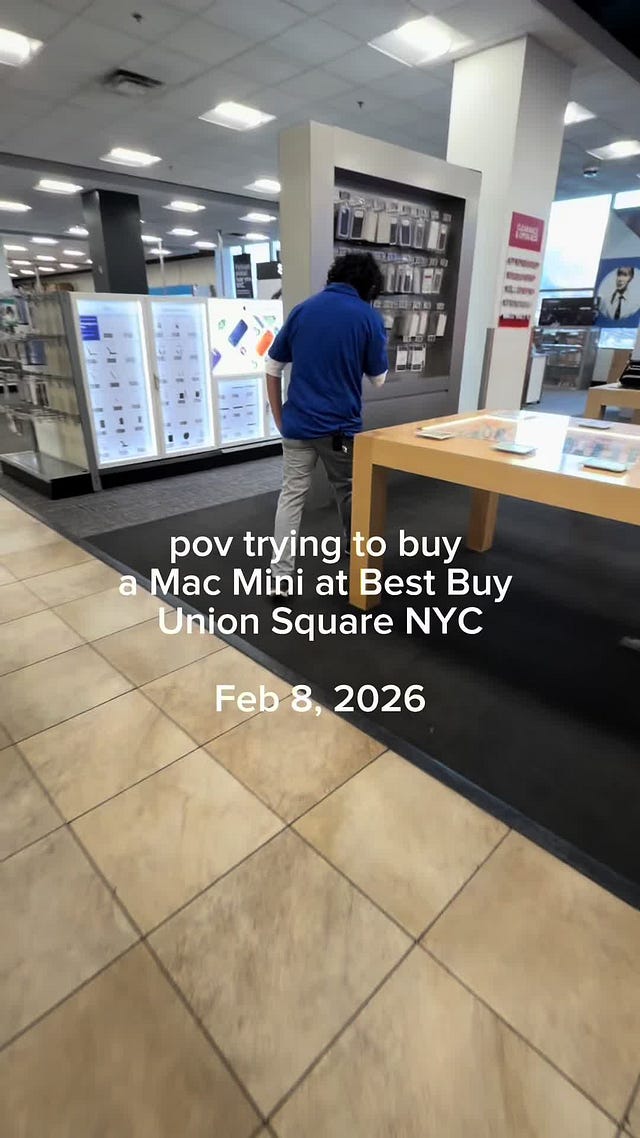

The empty shelves at Best Buy

As soon as it launched, I started running OpenClaw agents. I put my first OpenClaw on the small Mac Mini I keep at home. Within a week, I was running it so hard that the audio system and our CCTV cameras had stopped working reliably. The agent was consuming everything the machine had. So I bought a new Mac Mini specifically for the agent, R Mini Arnold. This week, Jensen Huang called OpenClaw “the new computer”. He didn’t mean a Mac Mini, but for now that’s what it runs on.

Slowly, delivery times for new Mac Minis stretched. A machine that arrived in three days when I bought mine in early February was now showing a seven-to-eight-week wait if you configured it with 64 gigabytes of RAM. The Mac Studio had similarly extended from two or three weeks to six or eight. Best Buy shelves ran empty.

At Exponential View, we bought two machines: a Mac Mini for Nathan Warren, who runs his own agents, and a tricked-out Mac Studio that hosts the team’s shared agents. If a company of eight buys two machines for AI infrastructure, the equivalent for a company of 100,000 is 25,000 new computers added to their estate.
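That 25,000 figure is a straight-line extrapolation of the two-machines-per-eight-people ratio. A minimal sketch of the arithmetic, assuming purchases scale linearly with headcount (a simplification; the function name is hypothetical):

```python
def scaled_machine_count(machines: int, headcount: int, target_headcount: int) -> int:
    """Scale a machines-per-person ratio linearly to a larger organization.

    Assumes the ratio holds at any size, which real procurement may not follow.
    """
    return machines * target_headcount // headcount


# Two machines for a company of eight, scaled to a company of 100,000.
print(scaled_machine_count(2, 8, 100_000))  # 25000
```

Large organizations would likely share machines more heavily than a team of eight, so the linear figure is best read as an order-of-magnitude illustration.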

There is a structural reason behind all this. Demand for AI inference is extremely high. Data center capacity is constrained, utilization is high and demand is growing faster than chips can roll off the production lines. When the frontier is rationed, the relative appeal of running a model locally changes. The Mac Mini shortage is a demand story, driven by people and organizations doing the same calculation I did.

Why Apple hardware?

Apple’s chips use unified memory that is shared across the CPU, GPU and Neural Engine, with extraordinarily high bandwidth for a consumer device. The Neural Engine, which runs nearly 40 trillion operations per second, is optimized specifically for matrix multiplication. That matters because matrix multiplication is what transformer models, the architecture behind every major AI system, actually do. The whole stack was built for something else, but it turns out to be almost perfectly suited for running AI locally.

But the silicon is only one layer. Apple also controls the OS, the App Store and a privacy architecture that is embedded within the enclaves, the software and the operating system. And because of that, Apple has something rare – genuine consumer trust. Think about everything you own, from your socks to your wallet. What do you touch the most? Your wedding band if you have one, your glasses if you have them and then an Apple device. That is the degree of consumer intimacy Apple has built.

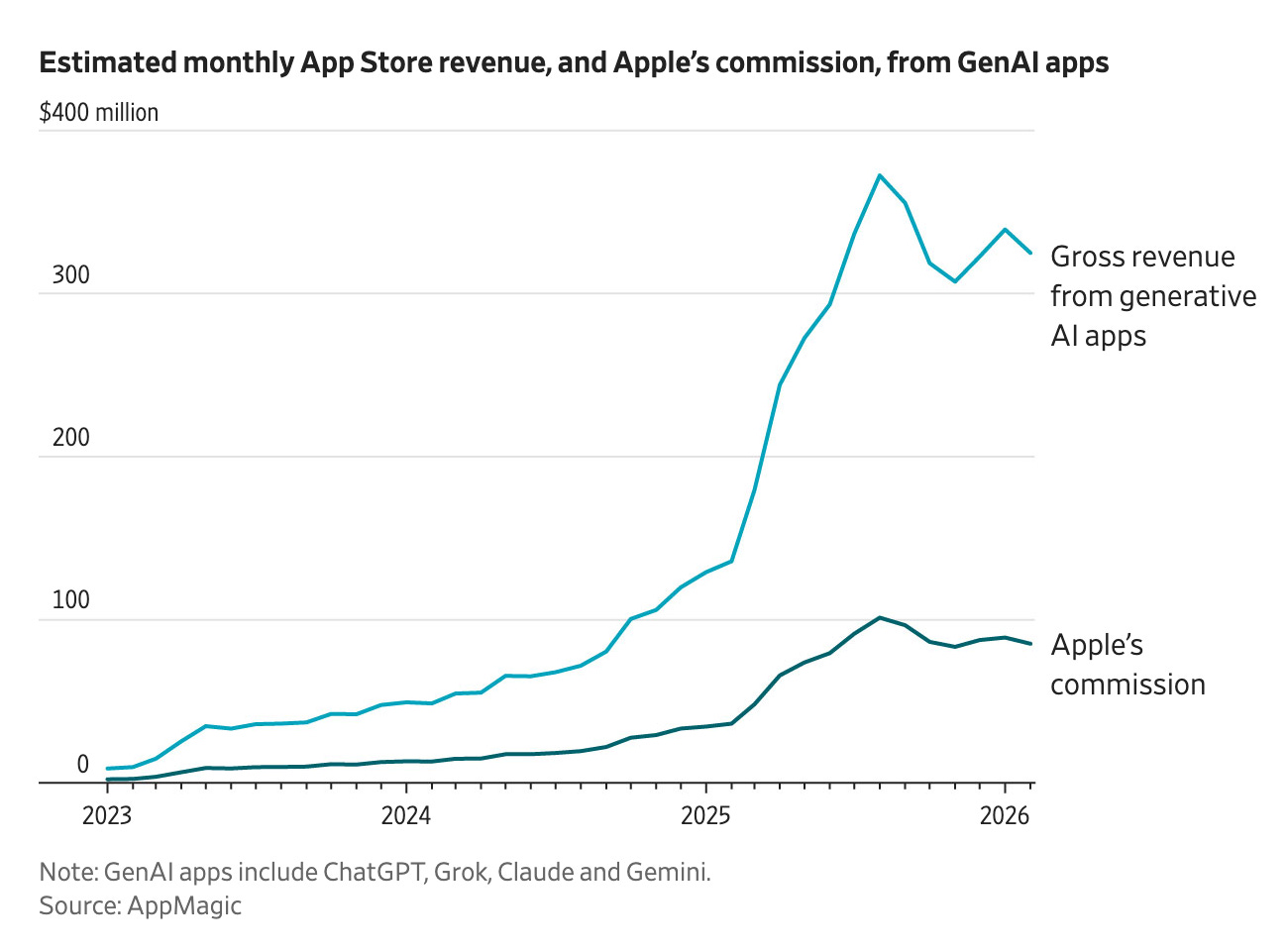

The consequence is a capture mechanism that Apple has been running for years and years without anyone calling it an AI strategy. Every third-party model ends up having to go through something Apple controls and that includes the App Store. They capture the value of those relationships even when they don’t own the model.

The 4K ceiling

When television manufacturers hit 4K resolution, a few pushed further. 8K sets appeared in showrooms, some people bought them. But the human eye can’t resolve detail above roughly 4K at normal viewing distances and the industry found its natural ceiling. No one is seriously building 16K TVs.

I think AI is approaching the same thing – not at the frontier, but for the tasks that actually fill most people’s days. Dario Amodei describes a “country of geniuses in a data centre,” Nobel Laureate-quality intelligence applied to every problem. That capability matters enormously for drug discovery, for scientific research, for genuinely hard reasoning tasks. But most of what we ask our AI is not that. For most daily tasks, you don’t need GPT-19.6. You need something closer to GPT-5.8, and through model distillation and efficiency improvements, that level of capability is already arriving on local devices.

When local is good enough for the task, the question is no longer which model but whose device. And Apple has been building that device, with the unified memory, the Neural Engine, the privacy enclave and the consumer trust, for two decades.


I suspect that if Steve Jobs were alive, he would have created a user experience, interfacing with an Apple LLM, that would blow our minds. He would have created a fully interactive experience with a next-generation Siri far ahead of what we have today (fully interactive speech, memory, personalization, etc.). I remember the magic of the first Mac and iPhone, and I suspect he would have given us an iMind or something akin to it.

If you run a local model like Qwen 2.5 Coder non-stop 24/7 on a top-of-the-range MacBook Pro (M5 Max, 128GB), you'll get ~2 million output tokens a day.

Compare that to running the same model in the cloud at *today's* rates; it would take over a decade before your original investment is repaid.

There are a lot of assumptions being smuggled in here – I'd love to see them properly explored.
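One way to surface those assumptions is to write the payback calculation out. The sketch below is illustrative only: the ~2 million tokens a day comes from the comment above, while the hardware price, the cloud price per million tokens and the electricity cost are hypothetical placeholders, not quoted figures. The function name is also hypothetical.

```python
def payback_years(hardware_cost_usd: float,
                  tokens_per_day: float,
                  cloud_usd_per_million_tokens: float,
                  power_usd_per_day: float = 0.0) -> float:
    """Years until avoided cloud spend equals the hardware purchase price.

    Returns infinity if running locally costs more per day than the cloud.
    """
    daily_cloud_cost = tokens_per_day / 1_000_000 * cloud_usd_per_million_tokens
    daily_saving = daily_cloud_cost - power_usd_per_day
    if daily_saving <= 0:
        return float("inf")
    return hardware_cost_usd / daily_saving / 365


TOKENS_PER_DAY = 2_000_000      # from the comment above
HARDWARE_COST_USD = 5_000       # hypothetical placeholder, not a quoted price
CLOUD_USD_PER_MILLION = 0.60    # hypothetical placeholder, not a quoted rate

years = payback_years(HARDWARE_COST_USD, TOKENS_PER_DAY, CLOUD_USD_PER_MILLION)
print(f"{years:.1f} years")  # 11.4 years
```

With these placeholder inputs the result lands in "over a decade" territory, but it is sensitive to every input: full 24/7 utilization, a cloud price that stays fixed, zero resale value and no electricity cost are all assumptions worth varying.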