AI is not a tool I pick up and put down. It’s become completely ambient, embedded in every process I run at work, daily.

A couple of weeks ago, in the first AI Vistas conversation, I sat down with Nita Farahany, Eric Topol, Nicholas Thompson and Rohit Krishnan to discuss exactly this: do we use our tools, or do they use us? That conversation pushed me to lay out how my own thinking process has evolved.

There is a useful distinction here, drawn by researchers Shaw and Nave, between cognitive offloading and cognitive surrender. I used to know dozens of phone numbers by heart. Now I know two, and I don’t miss the rest. That’s cognitive offloading: a strategic delegation that costs nothing. Cognitive surrender is something different: an uncritical abdication of reasoning itself. And there is something about AI, about its allure and potency, that could make surrender far more widespread.

Thinking is my livelihood. If I stop thinking new things, I’m not doing my job. This week, I want to share how I navigate this.


What I outsource

Roughly 100 million tokens a day flow through systems my team and I have built, and the most immediate change has been to my attention. Herbert Simon observed fifty years ago that a wealth of information creates a poverty of attention. He was right, and the problem has only compounded since. I want to avoid missing an important signal without drowning in everything else. So I built synthetic personas modelled on thinkers I value: Vinod Khosla for venture patterns, John Paulson for macro risk, Clayton Christensen for disruption logic. Each scans hundreds of items a week through its own intellectual lens.

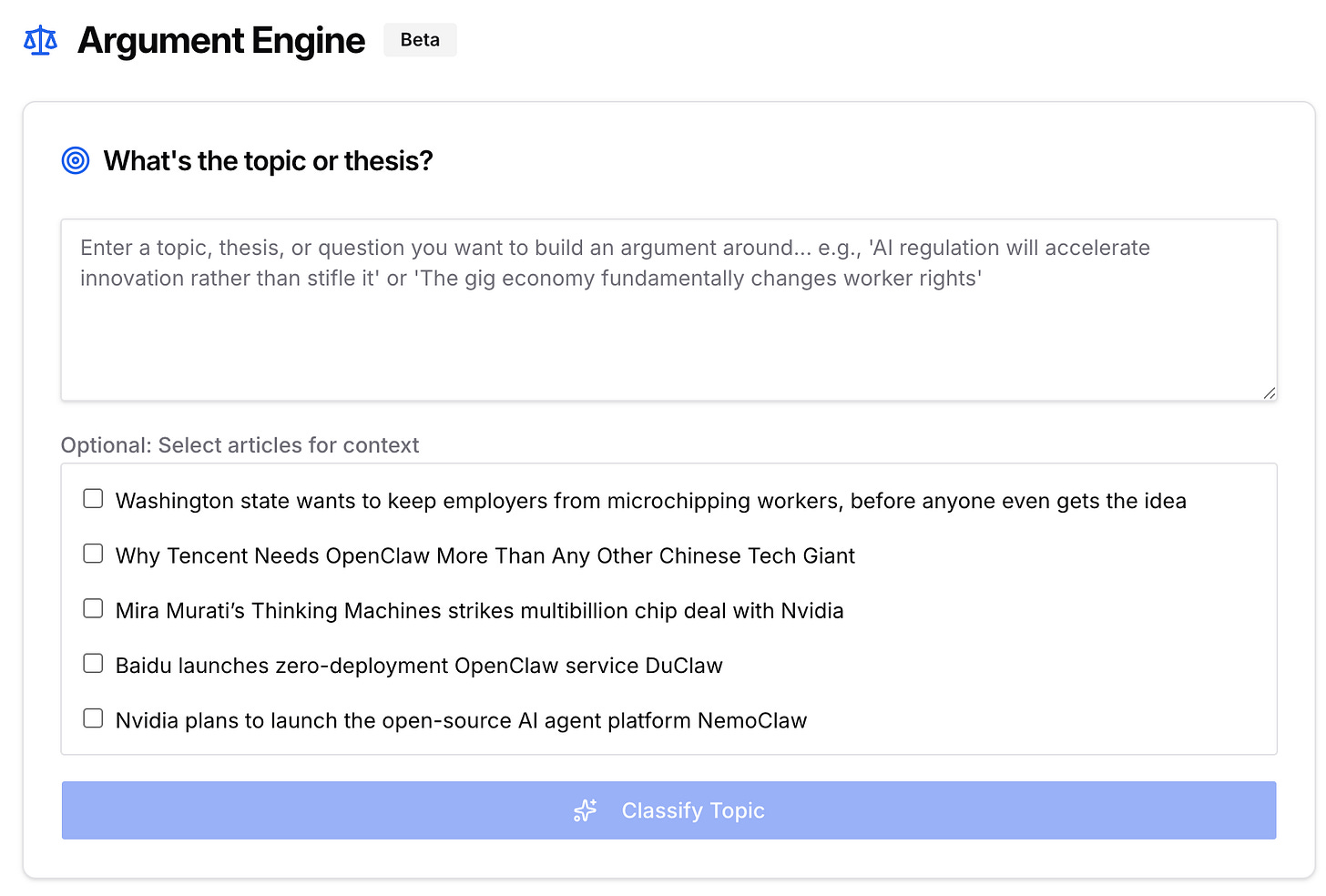

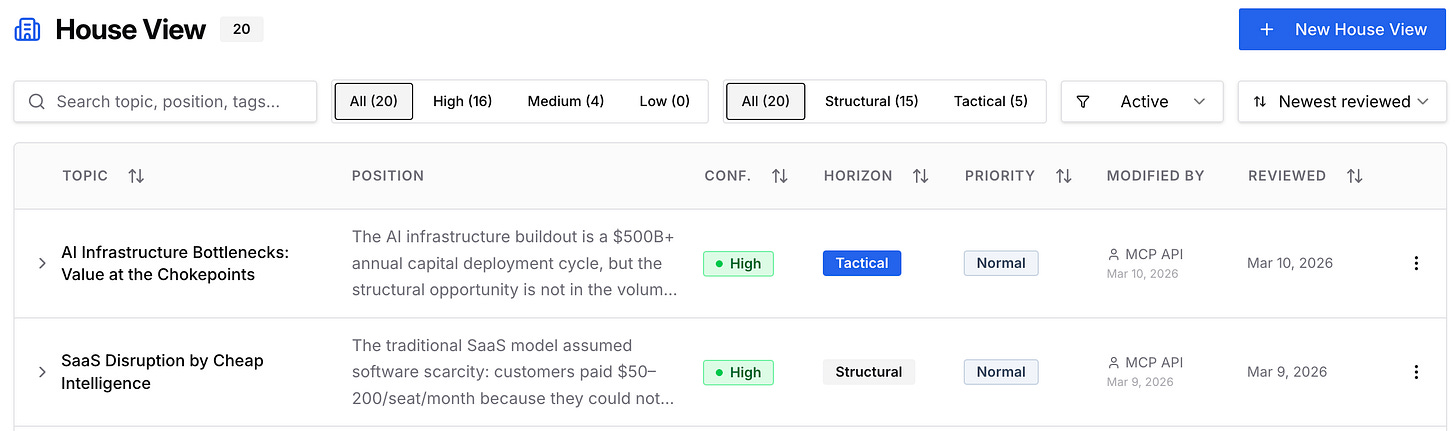

I’ve made an even bigger change to how I stress-test my reasoning. I’ll start writing and, before I’ve finished the paragraph, an argument engine trained on 100,000 words of my writing might flag a structural weakness: I’m asserting where I should be evidencing. As a complement, House Views codify what we already believe at Exponential View, from learning curves to how Anthropic’s strategy differs from OpenAI’s, so a new argument faces challenge rather than confirmation. The friction is useful because it catches what I might miss.

On a bad day, when I’m not doing my best writing, I reach for a tool I built called The Stylometer. A Claude skill trained on 60,000 words of my prose, it flags where sentences have gone slack and where the rhythm has drifted from my own voice. Synthetic editors interrogate the frame of the argument. The benchmark is always my own past best, not whatever I happened to produce that afternoon.

What I protect

What I’ve described above is the artificial scaffolding. It works because I deliberately and fiercely protect my actual thinking and what makes it mine.

The first thing I safeguard is the space where ideas arrive before they’re shaped. For me that’s a walk, a long shower or a blank piece of A4 in landscape mode with a fountain pen. These are the conditions under which something genuinely new can appear. I let the process be non-linear, messy and iterative, because straightening it out too early kills what’s interesting.

These moments are profoundly personal. They depend on a world model that took decades to build, assembled from every conversation, every argument I’ve lost, every book that changed how I saw something. That specific arrangement of experience and association belongs to no one else and can’t be replicated. I safeguard that lived interiority carefully, because the things worth saying tend to come from it.

And as Nita Farahany pointed out in the AI Vistas discussion:

Once you figure out where your generative constituent of competence lives, that’s the thing you protect from offloading.

This is the best arrangement I’ve found for the work I need to do right now. Ten uninterrupted years of thinking would likely yield something different and perhaps better in many ways. And all of this will evolve in the coming years, so keep your minds open.

Azeem

Further reading

Shaw, Steven D., and Gideon Nave. “Thinking-Fast, Slow, and Artificial: How AI is Reshaping Human Reasoning and the Rise of Cognitive Surrender.” Available at SSRN 6097646 (2026). Research on cognitive offloading and surrender.

Kosmyna, Nataliya, et al. “Your brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing task.” arXiv preprint arXiv:2506.08872 (2025). On cognitive debt from LLM-assisted writing.

David Perell’s “How I Write” podcast. “Ezra Klein: The Case Against Writing With AI”. On the value of reading and writing manually.

Exponential View. “AI Vistas: Where the human ends and the AI begins.” Our roundtable with Nita Farahany, Eric Topol, Rohit Krishnan and Nicholas Thompson.