🔮 LLM search & energy; Turing transformation; AI blockade on China; Funflation & Twitter demise ++ #444

Insider insights on AI and exponential technologies

Hi, I’m Azeem Azhar. As a global expert on exponential technologies, I advise governments, some of the world’s largest firms, and investors on how to make sense of our exponential future. Every Sunday, I share my view on developments that you should know about in this newsletter.

Latest posts

If you’re not a subscriber, here’s what you missed recently:

🪢 Inside the loop — On accelerating AI and geopolitical risk.

🐄 The cow and the academics — On AI disagreements.

Quick heads-up: The Exponential View Premium newsletter is updating its pricing. In six days, our monthly fee shifts from $12 to $19. But you still have a window to grab the current rate — and make the most of the savings by choosing the annual plan.

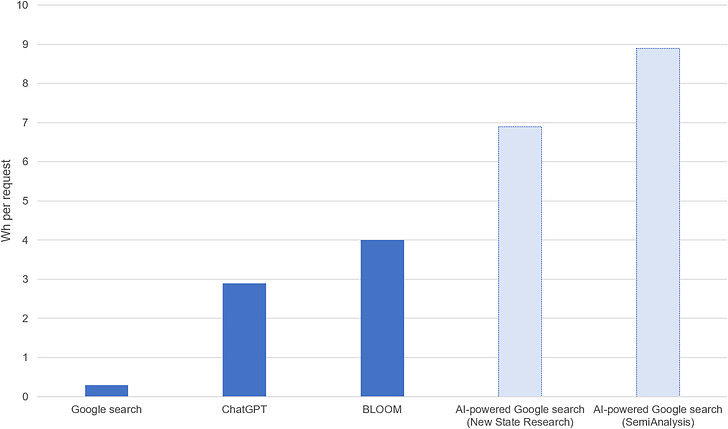

Sunday chart: The rising cost of LLM-based search

Large Language Models like ChatGPT are shifting the paradigm of information retrieval and search. These models go beyond merely providing a list of possible answers; they analyse, interpret, and contextualise queries, delivering nuanced and detailed responses. For instance, I regularly use Perplexity to help make sense of questions as diverse as tank survivability rates, what kind of lights I should get for my bookshelves, or understanding the regulatory burden across industrial sectors. LLMs have the potential to move beyond the search engine, towards synthesising information, a process I described three years ago in my essay “A Short History of Knowledge Technologies”.

However, this computational wizardry comes at a significant energetic cost. Each interaction with ChatGPT may consume up to 2.9 watt-hours (Wh) of energy, enough to boil two tablespoons of water and nearly ten times the 0.3 Wh energy cost of a standard Google search. And if Google replaced its current search algorithms with LLMs, SemiAnalysis estimates that each search request would cost up to 8.9 Wh of energy. Given Google’s 9 billion search requests per day this would lead to an annual energy use of 29.2 terawatt-hours (TWh) on their servers, equivalent to the entire annual energy consumption of Ireland.
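For readers who want to check the arithmetic, here is a minimal back-of-the-envelope sketch in Python. It uses only the per-request figures and the daily search volume quoted above; the 365-day year and the function name `annual_energy_twh` are my own assumptions for illustration, not part of the SemiAnalysis estimate.

```python
# Back-of-the-envelope check of the figures quoted above.
# All inputs come from the text; the 365-day year is an assumption.
WH_PER_LLM_SEARCH = 8.9        # Wh per LLM-based search (upper estimate)
WH_PER_STANDARD_SEARCH = 0.3   # Wh per standard Google search
WH_PER_CHATGPT_QUERY = 2.9     # Wh per ChatGPT interaction (upper estimate)
SEARCHES_PER_DAY = 9e9         # Google search requests per day
DAYS_PER_YEAR = 365            # assumption: no leap-year adjustment


def annual_energy_twh(wh_per_request, requests_per_day, days=DAYS_PER_YEAR):
    """Annual energy in terawatt-hours (1 TWh = 1e12 Wh)."""
    return wh_per_request * requests_per_day * days / 1e12


llm_twh = annual_energy_twh(WH_PER_LLM_SEARCH, SEARCHES_PER_DAY)
standard_twh = annual_energy_twh(WH_PER_STANDARD_SEARCH, SEARCHES_PER_DAY)
llm_vs_standard = WH_PER_LLM_SEARCH / WH_PER_STANDARD_SEARCH
chatgpt_vs_standard = WH_PER_CHATGPT_QUERY / WH_PER_STANDARD_SEARCH

print(f"LLM-based search:  {llm_twh:.1f} TWh per year")
print(f"Standard search:   {standard_twh:.1f} TWh per year")
print(f"LLM vs standard:   {llm_vs_standard:.1f}x per request")
print(f"ChatGPT vs search: {chatgpt_vs_standard:.1f}x per request")
```

On these inputs the LLM scenario comes out at roughly 29.2 TWh a year against about 1 TWh for standard search, which is where the "30 times" multiple comes from.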

Of course, several variables could offset this theoretical surge in energy use, including bottlenecks in server manufacturing, improvements in energy efficiency, and Google simply not wanting to pay 30 times the energy costs for a search request. Nevertheless, it illustrates how emerging technologies often come with heightened energetic demands.

As we look ahead, our digital world’s computational appetite shows no signs of abating - even without AI developments. Efficiency gains in data centres, which have historically helped mitigate increased energy consumption, are plateauing. MIT predicts that by 2030, data centres could devour up to 21% of the world’s total electricity supply, up from 1-1.5% currently. Other more conservative forecasts suggest energy demand will still increase by 67% by 2028. Either way, new technologies and the demand for computation will pressure the energy transition.

Yet this pressure can often stimulate the quest for greater efficiency. We’ve seen this dynamic play out in cryptocurrency. Initially, blockchain technologies like Ethereum relied on energy-intensive proof-of-work mechanisms. As energy concerns mounted, the industry pivoted towards more sustainable proof-of-stake models. We are already seeing promising avenues within AI, such as gains in the performance of smaller models. However, this cycle of new technologies raising energy consumption before efficiencies eventually kick in will likely continue into the future.

Of course, the long-term goal is to make these concerns irrelevant in an age of abundant, clean energy — we’re looking at you, nuclear fusion.

Key reads

Turing Trap or Transformation? Agrawal et al. argue that the framing of AI automation versus augmentation is wrong. Rather than being distinct, the two are often one and the same. They say that AI, initially intended for automating tasks, inadvertently acts as a force for augmentation of the broader workforce. For example, automating diagnostic skills in healthcare could diminish demand for specialised physicians while amplifying career opportunities for the much larger population of nurses and other medical professionals. The automation of high-value skills can democratise access to those skills, enabling those with generic abilities to enter previously specialised roles. This contrasts with technologies once thought to be augmenting, like computing and the internet, which, as the authors argue, have historically favoured the educated, leading to increased inequality. The punchline merits reflection:

Perhaps the best targets for computer scientists and engineers looking to build new systems is not to find intelligences that humans lack. Instead, it is to identify the skills that generate outsized income and build machines that allow many more people to benefit from those skills.

See also: GenAI could provide a way for scientists to rethink how they interrogate and summarise experimental results.

Economic warfare. The U.S. Commerce Department is considering blockading China from a broad category of AI programs. This extends the blockade on AI hardware to ‘frontier models’ that industry leaders like Google and OpenAI are seeking to develop. This policy, aimed at curbing China’s progress, seeks to widen the decision-making gap between the two countries, as described in my recent essay. That said, this perspective assumes that possessing advanced AI models is an all-or-nothing scenario, akin to having nuclear weapons. An alternative viewpoint suggests that due to the rise of open-source development and increasingly efficient, smaller AI models, a country could maintain competitive capabilities in AI even without access to the most advanced technologies. If this is the case, these restrictions are only likely to inflame relations with China without much benefit.

See also:

A research paper exploring GPT-4 Vision’s various abilities.

AI wrapped. The recently released State of AI Report from a friend of EV, Nathan Benaich, is an essential read for anyone wanting to grasp the pivotal developments in AI for 2023—a year bustling with advancements (as you well know). There is too much to summarise in a paragraph. Rather, I recommend you lean back and read it as a reference for the next few months.

Market data

Most legacy car manufacturers are finding it challenging to meet the UK government’s target for 2024, which mandates that 22% of their new car sales should be electric.

To address climate change, Singapore is investing $73 billion, which translates to $100 million per square kilometre of the country’s land area.

Google has successfully mitigated the largest Distributed Denial of Service (DDoS) attack ever recorded, with an unprecedented peak rate of over 398 million requests per second. This is 7.5 times larger than the previous largest attack.

Harvard University ranks at the bottom of the college free speech rankings, as compiled by the Foundation for Individual Rights and Expression. They received the lowest score ever.

After exiting Twitter, NPR experienced only a 1% drop in web traffic. It’s worth mentioning that Twitter previously accounted for just under 2% of NPR’s overall traffic.

Short morsels to appear smart at dinner parties

👾 Effective altruism, existential AI risk and Washington. Tech billionaires obsessed with existential risk are driving the policy agenda through a web of think-tank relationships. Not just in Washington, I would add — I see signs of this in the UK.

🏃🏽♂️ ChatGPT evaluated my running style based on a photo… of the sole of my running shoe.

🌐 The net neutrality debate is back.

🎶 Taylor Swift, Beyonce & funflation.

🧠 The largest map of the brain ever made.

🏈 No revenge for nerds? Evaluating the careers of Ivy League athletes.

🛡️ The fight to confuse data scrapers.

🚦 How Google is reducing traffic (road not search).

🧬 Researchers have used gene editing to create chickens resistant to avian flu.

Community events

😎 We are hosting another AI in Praxis event on 26 October to share the best practices for using AI in daily work and life. The event is open only to members of EV with an annual subscription. If you would like to present your use case on the day, please fill out this form.

End note

It’s become pretty clear that X, once the mighty Twitter, is spiraling, and not in a good way - something that, frankly, we could see coming. Misinformation is running wild. Numerous journalists have pointed out how X lets fake news, especially stuff about Israel/Gaza, run rampant while clamping down on legitimate sources.

For me, it’s losing its utility, fast. This is a big loss, as it’s been a core part of my professional learning for more than a decade. My tweet visibility has nosedived by around 70% since the takeover, and I’m guessing it’s because people in my network are using the service less. The platform’s deterioration is noticeable and substantial. Plus, the new head honcho? Not exactly a product whiz. One estimate suggests the firm has lost 80% of its value since the Musk-uisition.

I’m starting to think there might not be a path back to glory for X. In the meantime, I’m branching out to other networks like Threads and Bluesky. Hope to connect with you there!

Azeem

P.S. Thanks for all the fantastic feedback on my playlist, An Ambient Letter. More than 100 of you are following it.

What you’re up to — community updates

Chanuki Seresinhe is gearing up to crowdsource funds for Beautiful Places AI, harnessing machine learning and crowdsourced insights to capture the essence of outdoor beauty, starting with the UK. Join the upcoming webinar to learn more about the project and how you can support it.

Tony Curzon Price has been appointed to the board of Ofgem, the UK’s energy regulator. (Now we know who can help us reform the UK’s electricity market for a renewable future, rather than one besieged by gas interests.)

Ken Pucker recently contributed an article to the Stanford Social Innovation Review, titled “A Realist’s Guide to Investing for Good.” (Spoiler: It’s probably not an ESG fund).

Eliot Peper has released his new novel, Foundry, a near-future espionage thriller about how semiconductors are refactoring geopolitics!

Share your updates with EV readers by telling us what you’re up to here.