🔮🇨🇳 Inside the Chinese AI labs where America’s AI controls created its toughest competition

We put a number on China’s efficiency moat

We spent a week on the ground in China, visiting AI and robotics labs and seeing how things operate firsthand. We traveled through Beijing, Hangzhou and Shanghai to meet representatives from 14 labs, including DeepSeek, MoonshotAI, MiniMax, Z.ai, ByteDance, 01.AI, Alibaba, Ant Group, Xiaomi, AInnovation, Galbot, Unitree, ModelScope, and RWKV. We were joined by our friends Kevin Xu, Lily Ottinger, Florian Brand, afra, Kai Williams, Lingua Sinica, Jasmine Sun, Caithrin and Nathan Lambert, who made it all happen.

We participated in dozens of hours of discussions with researchers, founders, product leaders, and business owners across the infrastructure, hardware, models and application layers. Every lab is obsessed with ByteDance’s Doubao, and respectful of DeepSeek’s scientific process. Claude is the model of choice for coding, universally rated as the best thing out there. The researchers we met were humble, welcoming, focused purely on technical priorities and building the next big model. Researchers were also very young: at one lab in particular, the average age was 25.

But chip constraints are real. Everyone wanted Nvidia chips, and the constraint was showing up in longer and longer pre-training runs and iterative cycles.

We arrived in China and really wanted to understand how severely export controls were biting and how much they were harming AI development. The stock of AI compute in China is running two to three years behind that of the US. In the short term, it’s clear that the controls are making it harder.

But, as we discovered, it’s not as obvious over the long term.

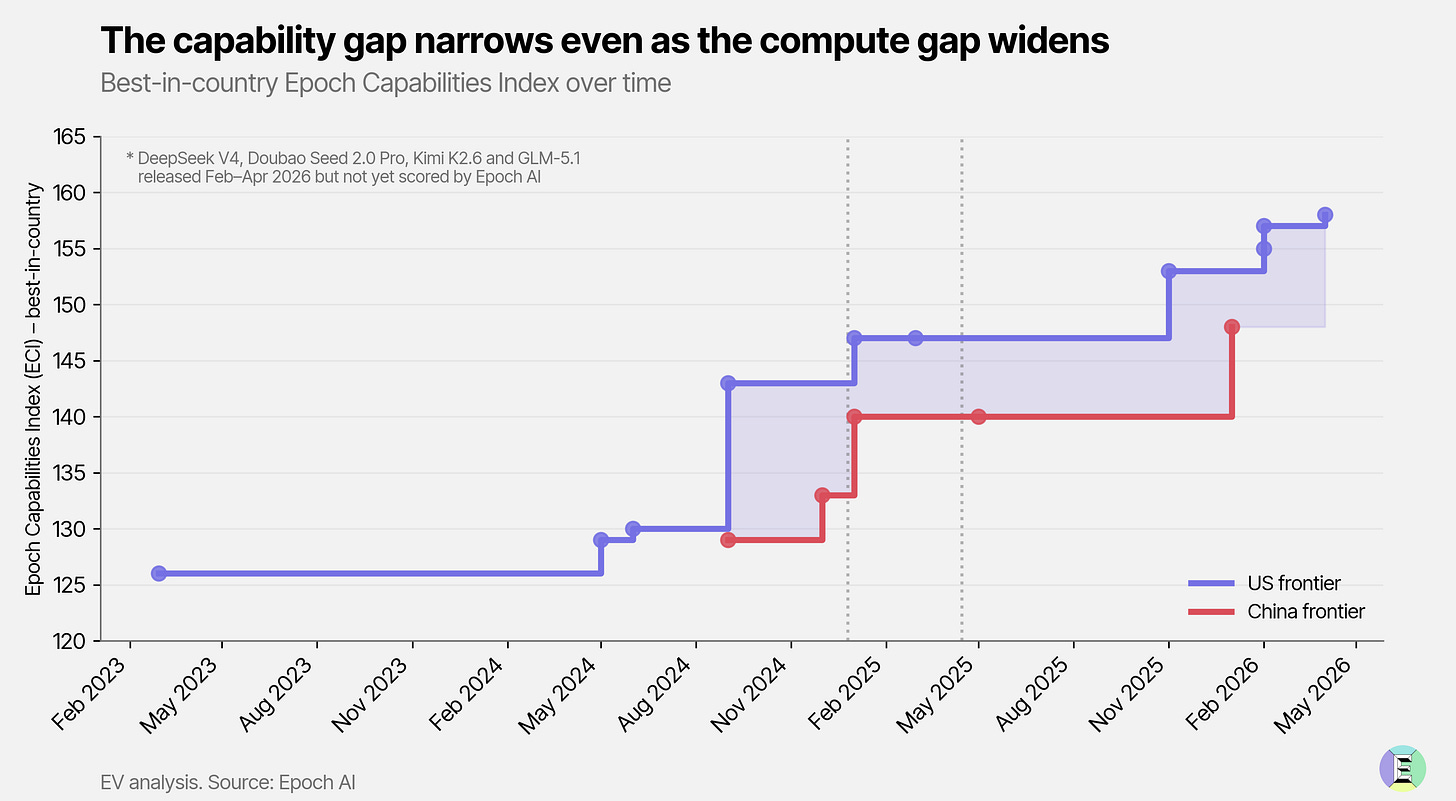

The export controls have become capability-generating – labs in China are forced to be ruthlessly efficient. Despite the three-year compute handicap, Chinese open-source models are only six to eight months behind the US frontier.

By listening to the researchers and digging into the data, we have estimated quantitatively just how significant that efficiency capability is. We reckon Chinese labs are extracting 4-7x as much intelligence per unit of compute as naive scaling predictions would suggest.

On the day a sitting US president lands in Beijing for the first time in nearly a decade, we decided to publish our research into how and why the constraints have inadvertently created the conditions for the most formidable competitors to develop exactly the capabilities that will matter most in the coming years.

The compute gap, the US lead

In every meeting with every Chinese lab, we heard a common refrain: we do not have enough compute. Less compute means fewer experiments and smaller models. This is a genuine constraint on research, development and deployment of AI.

That isn’t surprising. American researchers complain, too. So do the business people: Microsoft and Anthropic, for example, have explicitly stated that the lack of compute capacity has cost them meaningful revenue.

But the compute constraint in China is different. It isn’t just that there is less capital around – China’s AI startups raised $12.4 billion in 2025 compared to $285 billion in the US. It is that export controls on chips, initiated by Joe Biden in October 2022 and subsequently relaxed and tightened at various times by President Trump, have all but choked off the supply of advanced chips to the Chinese market. The interesting thing to us was seeing firsthand how local labs have responded.

Let’s step back for a moment first to put into context the compute gap.

US labs trumpet securing large amounts of compute. In recent weeks and months, Anthropic alone has signed deals totaling over 10 gigawatts of capacity – with Amazon, Google, Microsoft, Nvidia and SpaceX. OpenAI committed to 10 gigawatts of Nvidia systems last September, backed by up to $100 billion in Nvidia investment. These orders are now exclusively for the latest, most powerful silicon: Nvidia’s Blackwell series (B200, B300, GB200) shipping today and the next-generation Vera Rubin platform arriving later this year, and increasingly Google TPUs and others.

These large orders simply are not options for Chinese hyperscalers and labs. Supply isn’t entirely dry: Chinese customers are still getting their hands on Nvidia’s H100s, B200s and B300s. These are coming through from Singapore, by and large, via shell companies where shipments are relabelled as tea or toys. But quantities are at least an order of magnitude below those of their US rivals.

It is these top-tier American chips, especially the most recent vintages, that count. A single GB300 NVL72 rack (72 of Nvidia’s latest GPUs operating as one system) delivers 30x faster real-time inference than the equivalent H100 cluster from three years earlier, with 3.6x more memory per chip and 25x lower energy per inference. US labs are now ordering these systems by the gigawatt. Chinese labs cannot.

Chinese tech firms, notably Huawei, have made strides in building chips suited for AI. But even Huawei’s latest, the Ascend 950PR, launched in March, is roughly on par with the H100, released in 2022. The systems are also shipping in far smaller volumes. Nvidia is estimated to have shipped 7 million Hopper and Blackwell GPUs through October 2025 alone, and the rate is increasing. Huawei plans to ship 750,000 Ascend 950PR chips this year, which is still around a tenth of what Nvidia shipped last year.

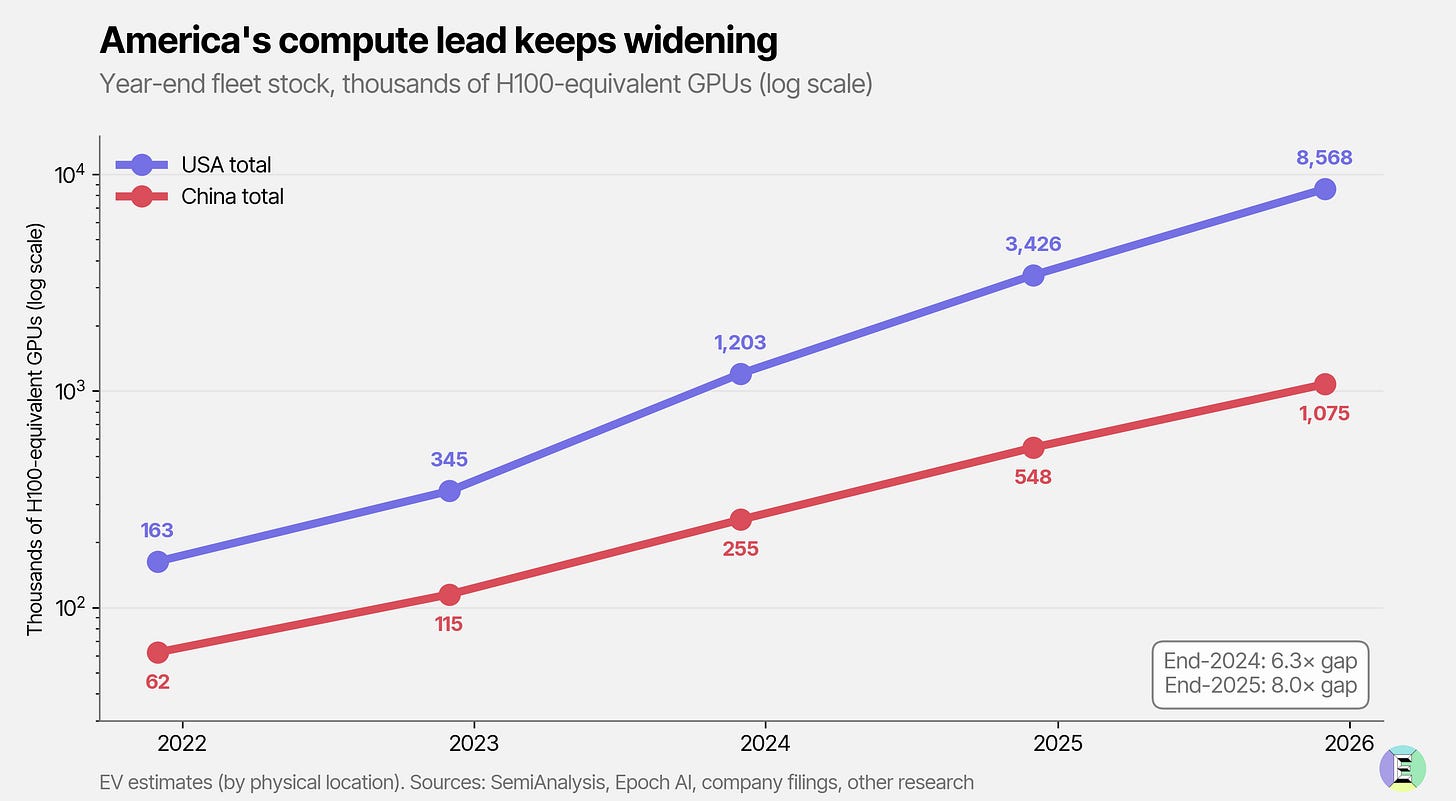

The result is that the US has a staggering lead in deployed AI compute capacity.

The lead is widening, not shrinking. In 2023, the US AI sector had triple China’s amount of deployable compute – almost all of which would have been focused on training AI models. By the start of this year, that gap was closer to eightfold.

Put differently, by the end of 2025, Chinese labs could likely access roughly the same scale of compute that the US enjoyed two years earlier.

The difference is how that compute is used. In 2023, most American capacity was tied up in training, not serving customers. By contrast, in 2025, China’s compute stack, augmented by data centers in Malaysia and Singapore, was doing double duty – supporting model training and serving hundreds of millions of consumers, and a rapidly growing base of enterprises, through apps like WeChat, Doubao and Alipay.

It’s important to separate compute capacity for training AI models from serving customers. China has a huge AI market. Doubao alone reaches 100 million daily active users. Token volumes are equally vast. By February 2026, we estimate Chinese token volumes had reached ~9 quadrillion tokens a month – compared to ~4 quadrillion across the main US/Western providers.

Alongside datacenters in Malaysia and Singapore, a large part of Chinese compute infrastructure is going to serve customers through inference. If half of the compute is used to serve those customers, that reduces the available compute for training models. We might conclude, with low confidence, that by the end of 2025, Chinese labs had as much compute available for model training as American labs did in mid-2023.
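The arithmetic behind that low-confidence estimate can be sketched in a few lines. This is a back-of-envelope illustration, not a measured result: it assumes the eightfold gap and the two-year lag described above are linked by steady exponential growth in US compute, and it takes the "half for inference" split as given. The function names and the constant-growth assumption are illustrative.

```python
import math

def implied_annual_growth(gap_ratio: float, lag_years: float) -> float:
    """Annual growth factor g such that g ** lag_years == gap_ratio."""
    return gap_ratio ** (1.0 / lag_years)

def extra_lag_years(training_share: float, annual_growth: float) -> float:
    """Additional lag, in years, if only a share of compute goes to training."""
    return math.log(1.0 / training_share) / math.log(annual_growth)

# Figures taken from the text; linking them via constant growth is an assumption.
GAP_RATIO = 8.0        # US vs China deployable compute at the start of this year
TOTAL_LAG_YEARS = 2.0  # China at end-2025 roughly matches the US two years earlier
TRAINING_SHARE = 0.5   # "if half of the compute is used to serve those customers"

growth = implied_annual_growth(GAP_RATIO, TOTAL_LAG_YEARS)
extra = extra_lag_years(TRAINING_SHARE, growth)

print(f"Implied US compute growth: {growth:.2f}x per year")
print(f"Extra lag from halving training compute: {extra * 12:.0f} months")
print(f"Training-compute lag: {TOTAL_LAG_YEARS + extra:.2f} years")
```

Under these assumptions the implied US growth rate is about 2.8x a year, and halving the training share adds roughly eight months of lag on top of the two years. That pushes the comparison point back from end-2023 into the first half of 2023, in the same neighbourhood as the mid-2023 estimate.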

By that logic, the performance of models from Chinese labs should be at least two years behind American models if labs in both countries are using the same approach: more computing and more data to build better models¹. The framework treats capability as a function of compute, holding training efficiency roughly constant.

But we aren’t seeing a 2-3 year gap.

The headline is that Chinese models are three to six months behind the US on benchmark performance, according to DeepSeek, and eight months behind according to the Center for AI Standards and Innovation, a US government agency.

In fact, Chinese labs appear to be keeping pace with US labs, or perhaps even narrowing the gap in some ways. The question for us then became: are the capability headlines wrong, or is something closing the gap that the compute numbers don’t capture?
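One rough way to size what would have to be closing the gap is to convert the difference between the compute lag and the capability lag into an implied efficiency multiple. The sketch below only illustrates the compute-scaling framework; it is not a reconstruction of how any published estimate was produced. It assumes compute grows at a constant annual factor (here about 2.8x, the rate at which an eightfold gap corresponds to a two-year lag), and the function name and scenario values are hypothetical.

```python
def efficiency_multiple(annual_growth: float,
                        compute_lag_years: float,
                        capability_lag_years: float) -> float:
    """Compute-equivalent gain needed to explain a capability lag that is
    shorter than the compute lag, under constant exponential growth."""
    return annual_growth ** (compute_lag_years - capability_lag_years)

# Assumption: constant growth at which an 8x gap equals a two-year lag.
ANNUAL_GROWTH = 8 ** 0.5

# Hypothetical scenarios: (compute lag in years, capability lag in years).
scenarios = {
    "2.0y compute lag, 8-month capability lag": (2.0, 8 / 12),
    "2.5y compute lag, 8-month capability lag": (2.5, 8 / 12),
    "2.5y compute lag, 6-month capability lag": (2.5, 6 / 12),
}

for label, (compute_lag, capability_lag) in scenarios.items():
    multiple = efficiency_multiple(ANNUAL_GROWTH, compute_lag, capability_lag)
    print(f"{label}: ~{multiple:.1f}x")
```

With a two-year compute lag and an eight-month capability lag, the multiple comes out at about 4x. Longer compute lags or shorter capability lags push it considerably higher, so the output is best read as an order-of-magnitude check rather than a precise figure.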

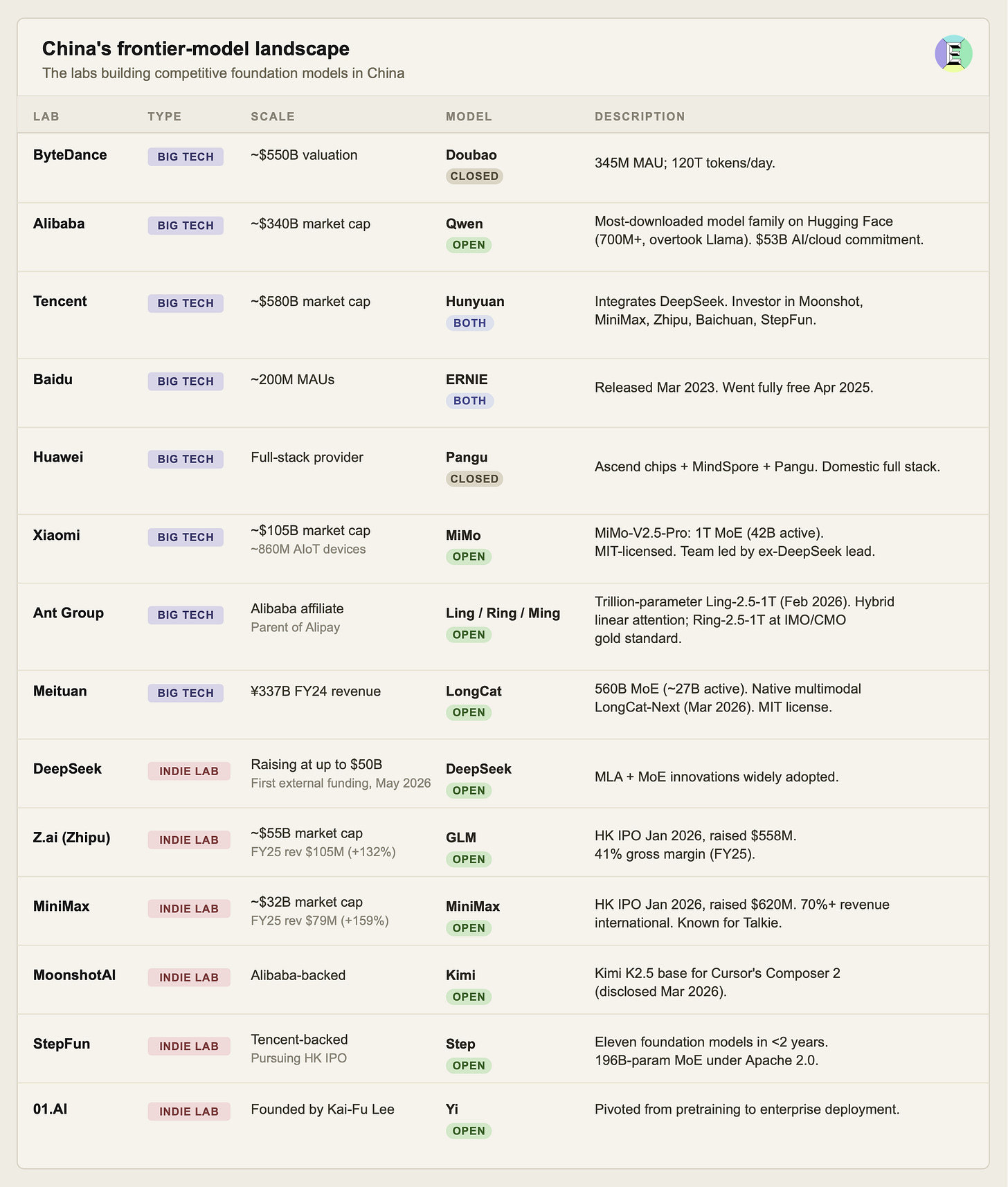

There is an additional wrinkle: market structure. In the US, five key frontier labs dominate training compute: OpenAI, Anthropic, Google DeepMind, Meta and xAI. In China, a thousand flowers are blooming. The big tech firms are developing their own frontier models:

And even larger firms are coming in because they have specific data and expertise: Ant Financial, with its Ling series, and Meituan, known for its on-the-go delivery-retail platform, have both entered the LLM development market.

The impact of so many firms training their own models is that the pool of compute is being divided still further.

The efficiency moat strikes back

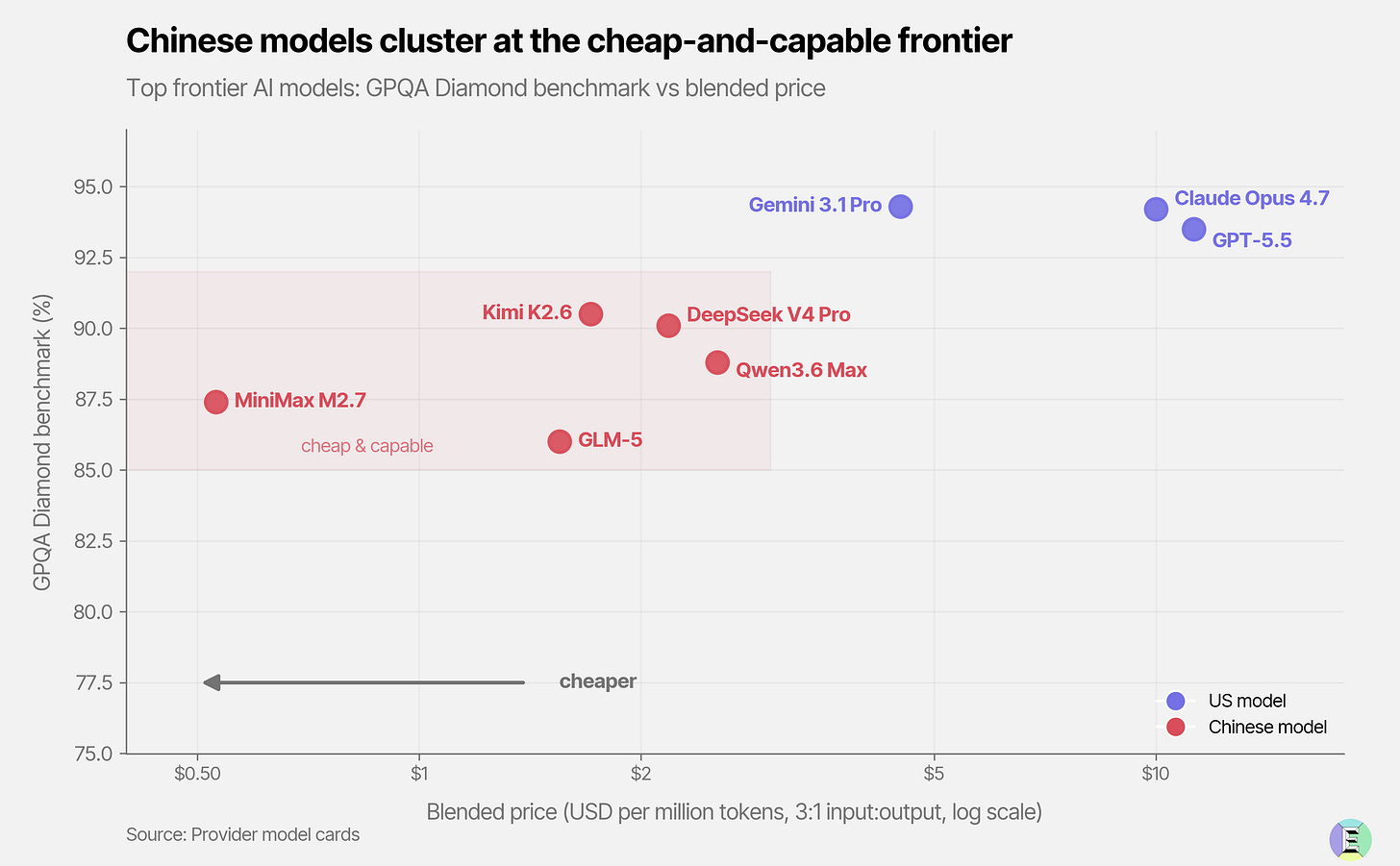

These labs are clearly finding efficiencies in training performant models. The efficiencies are also being passed through to inference: when the models are used to serve customers, they are much cheaper than roughly equivalent American models.

One shouldn’t put too much weight on AI benchmarks. They can be gamed, and they might not easily reflect how a model “feels” or works in practice. But they are one reference point, however inadequate. DeepSeek’s V4 Pro, their flagship model, is comparable to Claude’s Opus 4.6 in some ways. Opus 4.6 was released in February 2026, and is not Anthropic’s latest model. Cost-wise, though, you can see the difference. DeepSeek charges $0.43 per million input tokens and $0.87 per million output tokens. Opus 4.6 is 11 times more costly on input and 28 times more expensive on output.
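To see what those multiples mean for a bill, here is a minimal sketch that prices the same workload both ways. The DeepSeek prices and the 11x and 28x multiples come from the paragraph above; the Opus 4.6 prices are derived from those multiples rather than taken from a price list, and the monthly token volumes are a hypothetical workload chosen for illustration.

```python
# Prices in USD per million tokens, as quoted in the text.
DEEPSEEK_INPUT_USD = 0.43
DEEPSEEK_OUTPUT_USD = 0.87

# Multiples quoted in the text; the Opus prices below are derived, not quoted.
OPUS_INPUT_MULTIPLE = 11
OPUS_OUTPUT_MULTIPLE = 28
opus_input_usd = DEEPSEEK_INPUT_USD * OPUS_INPUT_MULTIPLE
opus_output_usd = DEEPSEEK_OUTPUT_USD * OPUS_OUTPUT_MULTIPLE

def workload_cost(input_tokens: int, output_tokens: int,
                  input_price: float, output_price: float) -> float:
    """Total cost in USD, given per-million-token prices."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000

# Hypothetical monthly workload: 2 billion input and 500 million output tokens.
INPUT_TOKENS = 2_000_000_000
OUTPUT_TOKENS = 500_000_000

deepseek_cost = workload_cost(INPUT_TOKENS, OUTPUT_TOKENS,
                              DEEPSEEK_INPUT_USD, DEEPSEEK_OUTPUT_USD)
opus_cost = workload_cost(INPUT_TOKENS, OUTPUT_TOKENS,
                          opus_input_usd, opus_output_usd)

print(f"DeepSeek V4 Pro: ${deepseek_cost:,.0f}")
print(f"Opus 4.6 (implied prices): ${opus_cost:,.0f}")
print(f"Blended multiple: {opus_cost / deepseek_cost:.1f}x")
```

For this assumed mix the bill is about $1,295 versus about $21,640, a blended multiple of roughly 17x. The blended figure shifts towards 28x as the share of output tokens rises and towards 11x as it falls.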

These are not promotional one-offs. Across the Chinese frontier, Kimi K2.6 sits at $0.95 input (among the cheapest models in the global top 10 by GPQA Diamond), and Alibaba’s Qwen models are priced in a similar band. The price of inference is a function of three factors: the actual cost of serving the model, its compute complexity and energy costs, and the margin the provider is willing to give up.

The margins appear largely healthy. Z.ai serves its GLM-5 model at $1.00 per million input tokens, which is 3x cheaper than Claude Sonnet 4.6, and 5x cheaper on output. Despite this, it boasts a 50% gross margin, and MiniMax enterprise margins sit at 70%, though we don’t know if this holds across the board. DeepSeek, for its part, ran for years on internal funding alone, only turning to outside capital this month.

Finally, that efficiency shows up in how easily these models run on consumer hardware like laptops and phones. The leading local models in the world are almost all Chinese open-source, aggressively distilled down to smaller, lighter variants. A 5GB Qwen3-8B model runs on my Mac, as does DeepSeek R1’s 7B distilled variant, which has been pulled 85 million times on Ollama, making it the second-most-downloaded local model in the world. Cursor even built its Composer 2 model on top of MoonshotAI’s Kimi K2.5 model. The only US-based open-source model we run locally is Google’s Gemma 4.